Why Multi-User AI Agents Get Complicated Fast

Most AI agent demos feel magical right up until the moment you try to use them with an actual team. That is where the clean little demo turns into a real product problem. The second more than one person can talk to the same agent, you are no longer building a toy. You are designing an access system.

This is the part a lot of people skip. They obsess over model quality, response latency, and tool use. Meanwhile, the real question is much simpler to state and much harder to answer: who is allowed to see what, trigger what, and act on what?

If your AI agent can access pricing, contracts, customer history, internal docs, meeting notes, or finance data, you cannot just toss it into a shared environment and hope the prompt behaves. That is not architecture. That is wishful thinking.

The moment an agent becomes multi-user, the problem changes

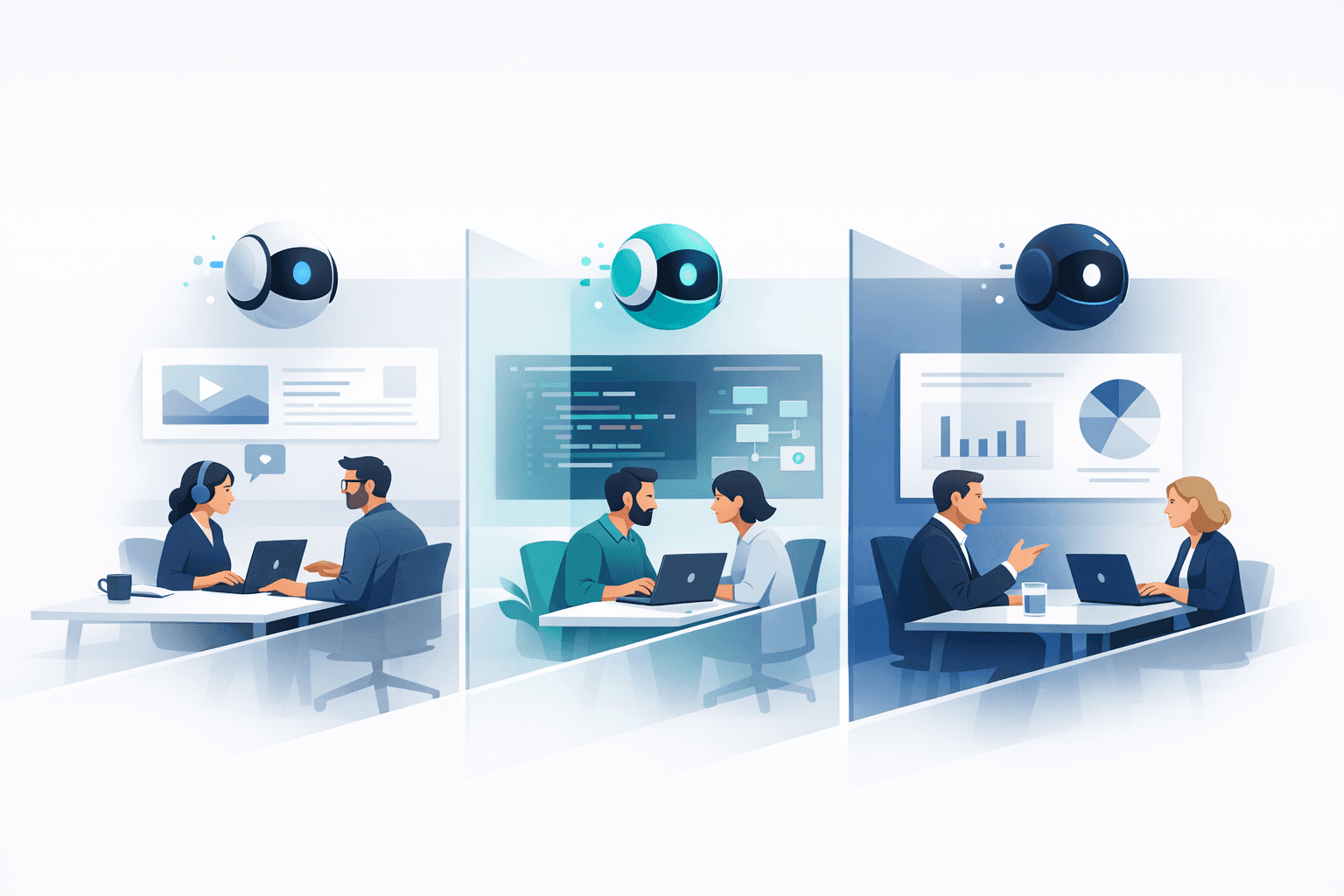

A personal AI assistant is one thing. A team-facing AI agent is another. In a single-user setup, the trust model is straightforward. The agent works for one person, uses one person's tools, and operates inside one person's permission boundary. That is manageable.

In a business, the moment multiple employees, contractors, or clients interact with the same agent, everything gets messier. Sales should not see the same data as finance. Clients should not have visibility into your internal pricing logic. One account manager should not be able to query another account manager’s conversations just because the model happens to have access to the same backend.

This is exactly why multi-user agents get complicated fast. The challenge is not only what the model can answer. The challenge is what the system should ever allow it to know in the first place.

There are really only two ways to build this

The first way is to enforce restrictions in the prompt. You tell the model not to reveal certain data. You tell it to ignore sensitive sources for certain users. You add little rules such as "do not mention internal pricing," "do not expose private client information," or "do not retrieve confidential records unless the user is authorized."

This approach is popular because it is fast. It also feels clever. Unfortunately, it is not a security boundary.

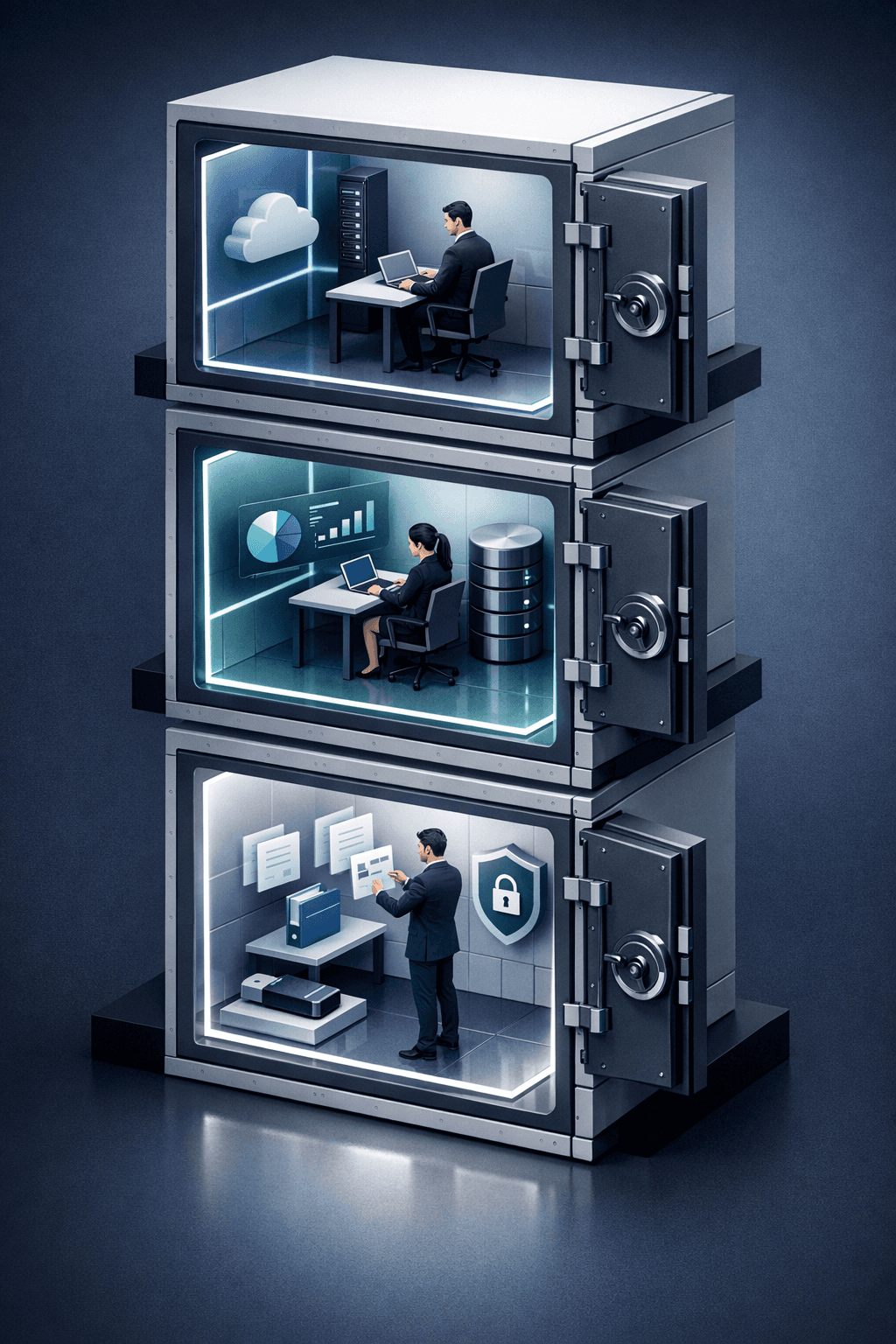

The second way is to build the restriction into the design of the agent itself. In that model, the system decides what data, tools, and actions are available before the model ever starts reasoning. The model is not asked to politely avoid forbidden territory. It simply does not have the keys to walk into it.

That distinction is everything. One is a suggestion written in natural language. The other is an actual boundary.
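To make the second approach concrete, here is a minimal Python sketch. The role names, tool names, and the `tools_for` helper are hypothetical and not taken from any particular framework. The only point is that the filtering happens in ordinary code, before any prompt is assembled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    allowed_roles: frozenset  # roles that may ever be offered this tool


# Hypothetical registry; a real system would load this from configuration.
REGISTRY = [
    Tool("search_public_docs", frozenset({"client", "sales", "finance"})),
    Tool("read_crm_notes", frozenset({"sales"})),
    Tool("read_internal_pricing", frozenset({"finance"})),
]


def tools_for(role: str) -> list:
    """Return only the tools this role may use, decided before the model runs."""
    return [tool for tool in REGISTRY if role in tool.allowed_roles]


# A client session is only ever told about the tools in this list.
client_tools = [tool.name for tool in tools_for("client")]
print(client_tools)
```

In this sketch a client session never learns that `read_internal_pricing` exists, so there is nothing for a cleverly worded request to unlock.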

Why prompt-level security breaks

Large language models are extraordinarily useful, but they are not permission systems. They do not reliably separate trusted instructions from untrusted content with the rigor a real security architecture requires. If the wrong content enters the context window, or the wrong user frames the request the right way, the model can be manipulated into revealing, retrieving, or acting in ways you never intended.

People call this prompt injection, prompt hijacking, or jailbreaks, but the label is almost beside the point. The real issue is that a prompt is still text. Text is soft. Text can be overridden, reframed, poisoned, or confused. If your entire access-control strategy depends on text winning an argument inside a model, you are eventually going to lose that argument.

This matters even more when tools are involved. The dangerous part of an agent is rarely the sentence it writes. The dangerous part is what it can do. If it has broad access to your CRM, files, calendars, pricing docs, or internal systems, a failure is no longer an awkward answer. It becomes a data leak, a policy violation, or a customer trust disaster.

What architecture-level control actually looks like

A real multi-user agent needs scoped access at the system level. That means every user session should inherit the right identity, the right permissions, and the right data boundaries. It means the model should only be able to call tools that are authorized for that specific person, in that specific context, for that specific role.

In practice, this means designing for least privilege from day one. A client-facing workspace should not have access to internal pricing logic. A support agent should not inherit founder-level visibility. A contractor should not get broad retrieval privileges just because they are in the same Slack channel as the founder. Every access path needs to be deliberate, scoped, and enforceable.
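One way to picture least privilege is a deny-by-default check at the point where a tool actually executes. The sketch below is illustrative only: the `GRANTS` table, the scope strings, and the `run_tool` helper are assumptions made for this example, not a description of any specific product.

```python
# Hypothetical role-to-scope grants. Anything not listed is denied.
GRANTS = {
    "founder": {"pricing:read", "crm:read", "docs:read"},
    "support": {"crm:read", "docs:read"},
    "contractor": {"docs:read"},
}


def is_allowed(role: str, scope: str) -> bool:
    """Deny by default: unknown roles and unlisted scopes get nothing."""
    return scope in GRANTS.get(role, set())


def run_tool(role: str, scope: str, action):
    """Check the grant in code before executing the requested action."""
    if not is_allowed(role, scope):
        raise PermissionError(f"{role!r} lacks scope {scope!r}")
    return action()
```

Because the check lives in `run_tool` rather than in the prompt, a contractor asking for pricing data hits a `PermissionError` no matter how the request is phrased.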

It also means isolation. Different teams, customers, and contexts should not bleed into each other. The cleanest multi-user agent systems are not the ones with the longest prompts. They are the ones with the clearest boundaries.
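Isolation can be sketched the same way. In the hypothetical example below, the tenant comes from the authenticated session, never from anything the model or the user types, and retrieval filters on it before looking at the query. The `Session` type, the `DOCUMENTS` list, and the sample data are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    user_id: str
    tenant_id: str  # set by the auth layer, never by the model


# Hypothetical in-memory store standing in for a database or search index.
DOCUMENTS = [
    {"tenant_id": "acme", "text": "Acme renewal rate: 12%"},
    {"tenant_id": "globex", "text": "Globex renewal rate: 9%"},
]


def retrieve(session: Session, query: str) -> list:
    """Filter by the session's tenant first, then match the query text."""
    return [
        doc["text"]
        for doc in DOCUMENTS
        if doc["tenant_id"] == session.tenant_id
        and query.lower() in doc["text"].lower()
    ]
```

A Globex user who searches for "acme" gets an empty list here, because the other tenant's rows are excluded before the query is even considered.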

And then there is auditability. If an agent can trigger workflows, pull data, or draft sensitive outputs, you need to know who asked for what, what the system accessed, and why a specific action happened. Without that, you do not have operational confidence. You have vibes.
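A rough sketch of what that record could look like, assuming every tool call passes through a single wrapper. The `audited_call` function, the field names, and the in-memory `AUDIT_LOG` list are hypothetical; a production system would more likely write to append-only storage.

```python
import json
from datetime import datetime, timezone

AUDIT_LOG = []  # hypothetical sink used only for this example


def audited_call(user_id: str, reason: str, tool_name: str, args: dict, tool):
    """Run a tool and record who asked, why, what was called, and how it ended."""
    entry = {
        "at": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "reason": reason,  # the request that led to this call
        "tool": tool_name,
        "args": args,
        "outcome": "error",  # overwritten only if the tool returns normally
    }
    try:
        result = tool(**args)
        entry["outcome"] = "ok"
        return result
    finally:
        AUDIT_LOG.append(entry)  # logged whether the call succeeded or failed


def lookup_invoice(invoice_id: str) -> str:
    return f"invoice {invoice_id}"


audited_call("alice", "customer asked for a copy", "lookup_invoice",
             {"invoice_id": "INV-1"}, lookup_invoice)
print(json.dumps(AUDIT_LOG[-1], indent=2))
```

The entry is appended in a `finally` block, so failed calls leave a trace too. Those are often the ones you most want to see.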

This is where most agent platforms quietly fall apart

A lot of agent frameworks were effectively built with a personal-assistant trust model. That is fine if the agent is serving one person inside one environment. It is not fine when you try to stretch that same foundation across a team, let alone across clients.

That is the core issue here. OpenClaw and similar approaches can be impressive for individual use. But when you try to make them behave like a secure multi-user system, you start fighting the architecture. You end up layering prompts on top of prompts, patching over holes, and hoping nobody asks the one question that exposes the whole setup.

Founders feel this pain quickly because SMBs do not have time for elegant security theater. If your client can accidentally discover that somebody else got a better rate, or your team can query data they were never meant to access, the problem is not theoretical anymore. It is commercial.

Why we built Notis differently

At Notis, we treated this as a product design problem from the beginning. Not as a prompt-writing exercise. Not as a workaround. A real agent for a real business has to understand context, yes, but it also has to respect boundaries. That means scoping what each user can access, what each automation can trigger, and what each workflow can see.

The point is not to make the model more obedient. The point is to make the system more trustworthy. When access control lives in the architecture, the model can be useful without becoming reckless. That is the standard businesses should demand.

This is also why I think the market is still underestimating what it means to deploy agents seriously. Everybody wants autonomous workflows. Very few people want to talk about permission boundaries, approval layers, and audit trails. But those are exactly the things that make autonomy viable outside a demo.

What SMB founders should ask before adopting any agent platform

If you are evaluating an AI agent for your team, do not stop at the demo. Ask what happens when multiple users with different roles interact with the system. Ask whether tool access is scoped per user or shared behind the scenes. Ask whether client data is isolated. Ask whether actions are traceable. Ask whether approvals exist for high-risk workflows.

If the answer is basically "do not worry, the prompt handles it," keep digging.

Because in the real world, the quality of an AI product is not defined by how impressive it looks when everything goes right. It is defined by how safely it behaves when somebody asks the wrong thing, has the wrong permissions, or tries to push it past its intended boundary.
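For the approvals question in particular, it helps to know roughly what a good answer looks like. The following is a minimal sketch under stated assumptions: the action names in `HIGH_RISK`, the `ApprovalQueue` class, and the no-self-approval rule are all invented for illustration and do not describe any vendor's implementation.

```python
from dataclasses import dataclass, field
from typing import Callable

HIGH_RISK = {"send_contract", "issue_refund"}  # hypothetical action names


@dataclass
class ApprovalQueue:
    pending: dict = field(default_factory=dict)
    next_id: int = 1

    def submit(self, user_id: str, action: str, run: Callable[[], str]) -> str:
        """Run low-risk actions immediately; park high-risk ones for a human."""
        if action not in HIGH_RISK:
            return run()
        ticket = self.next_id
        self.next_id += 1
        self.pending[ticket] = (user_id, action, run)
        return f"pending approval #{ticket}"

    def approve(self, ticket: int, approver_id: str) -> str:
        """Execute a parked action, refusing self-approval."""
        user_id, _action, run = self.pending[ticket]
        if approver_id == user_id:
            raise PermissionError("requesters cannot approve their own actions")
        del self.pending[ticket]
        return run()
```

Here the agent can prepare a refund, but nothing happens until a second person signs off, and that rule is enforced by the queue rather than by the model's good behavior.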

Architecture first, or regret it later

Multi-user AI agents are powerful. They can absolutely transform how SMBs operate. But the second you move beyond a single trusted user, you need to stop thinking like a prompt engineer and start thinking like a systems designer.

That is the real dividing line in this market. Some products treat access control as a sentence inside the prompt. Others treat it as a core part of the architecture. Only one of those approaches survives contact with an actual business.

That is why I believe multi-user agent design starts with boundaries, not bravado. And it is why we built Notis that way from day one.