From Shipping Agentic Product Updates to Building GTM With AI

This week felt like one of those rare founder weeks where a dozen threads that used to feel separate suddenly started reinforcing each other.

On the product side, we are stabilizing a cinematic timeline and debug harness, a task manager, a live note editor, and a persistent desktop voice mode for beta. On the engineering side, we are tightening how coding agents work so they do not just write code, but also prove that the code survives reality. And on the GTM side, we are building the same kind of system for growth: one where context compounds, content gets reused, and the machine gets better every week instead of starting from zero every time.

If I had to summarize what is changing at Notis right now, it is this: we are moving from isolated AI tricks to a more operational kind of intelligence.

Cinematic timeline and debug harness illustrating how agentic product updates move from beta stabilization to production readiness.

The product is starting to feel coherent

A lot of product development looks messy from the outside because users only see the polished release, not the period where multiple pieces are being made reliable at the same time.

That is exactly the phase we are in.

We are stabilizing four important surfaces for beta this week:

A more cinematic timeline and debug harness

A task manager that makes follow-through more visible

A live note editor that makes AI-assisted drafting feel less brittle

A persistent desktop voice mode that keeps Notis available like a real working companion, not a one-off prompt box

The target is to push these changes toward production around April 20 if stability holds.

That date matters less than what it represents. The real milestone is not shipping features individually. It is making the product feel like one environment instead of a bundle of disconnected capabilities.

That is where a lot of AI products still fall apart. They demo beautifully, but they do not yet hold together as a working system. I care less about whether something looks magical in a clip and more about whether it keeps delivering after the tenth, fiftieth, and hundredth use.

We changed the rules for our coding agents

One of the biggest bottlenecks in AI-native product development is not code generation. It is validation.

It is surprisingly easy for an agent to write something that looks correct. It is much harder for that same agent to prove the change works across the actual system.

So we have been tightening the loop.

Our coding agents are increasingly expected to do four things before I trust the output:

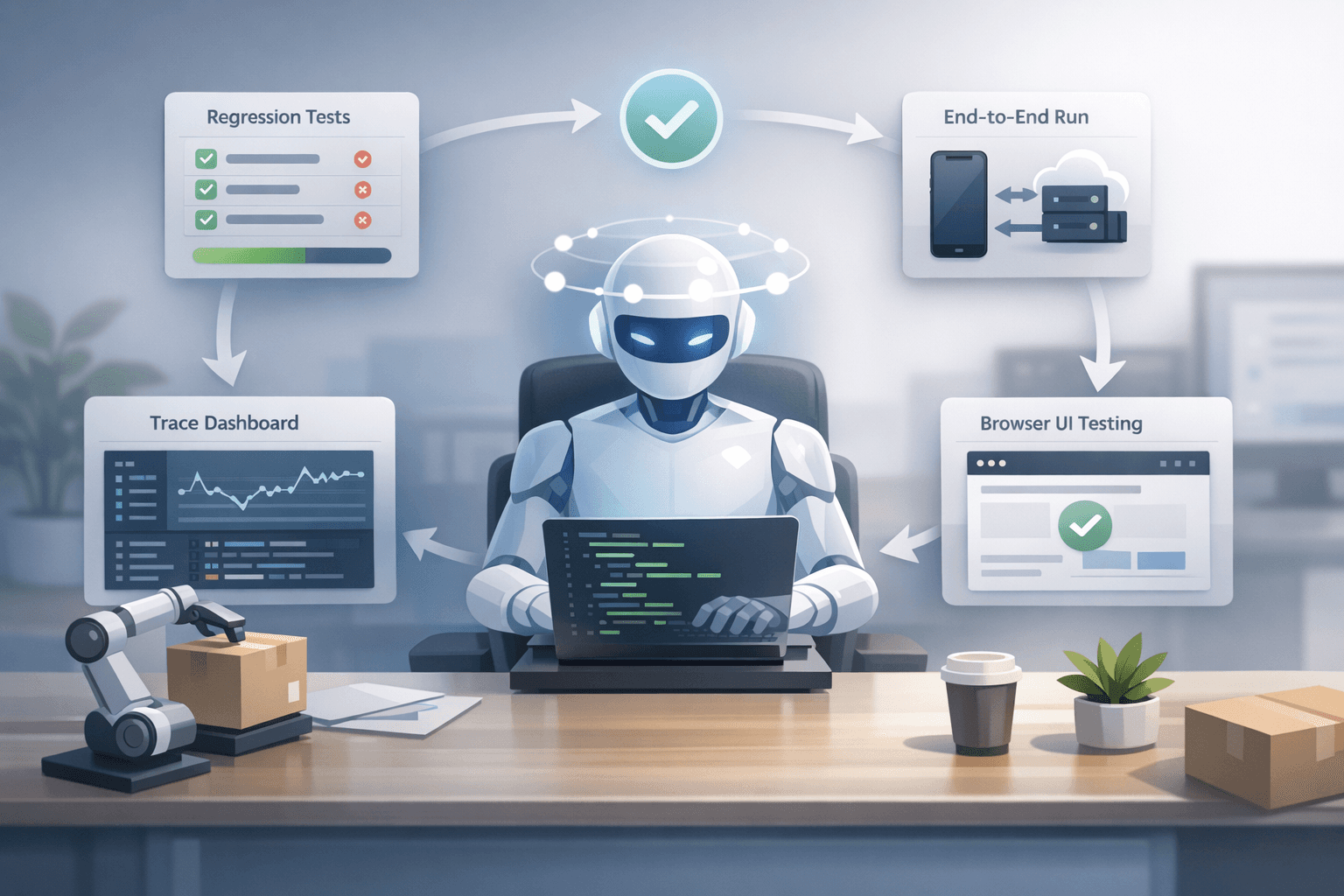

Write regression tests

Run end-to-end tests through real channels

Inspect Langfuse traces

Validate UI changes directly in the browser

Agent-driven product development: coding agents validate every change through tests, real-world runs, trace review, and browser checks before shipping.

That sounds obvious. In practice, it changes everything.

The moment you force an agent to verify its own work across real user paths, you stop treating it like an autocomplete engine and start treating it more like a junior operator. It still needs supervision. It still fails in strange ways. But it can now handle much longer autonomous work cycles without creating chaos.

We are starting to unlock stretches of 30-plus minutes where agents can work with much less manual interruption because the testing burden is no longer entirely pushed back onto humans.

That matters more than people think.

The real drag in agentic development is often not generation quality. It is the founder or engineer becoming the universal QA layer for every tiny change. Once that happens, the system stops scaling with your ambition.
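To make the four-step expectation above a bit more concrete, here is a minimal sketch of a verification gate in Python. It is an illustration under assumptions, not our actual harness: the names `CheckResult`, `run_verification_gate`, and `is_trusted` are hypothetical, and the four checks are stand-in lambdas where real ones would call a test runner, a real channel, the tracing backend, and a browser.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class CheckResult:
    name: str
    passed: bool
    detail: str = ""


# Each check is a (name, callable) pair; the callable returns (passed, detail).
Check = Tuple[str, Callable[[], Tuple[bool, str]]]


def run_verification_gate(checks: List[Check]) -> List[CheckResult]:
    """Run every check, even after a failure, so the full picture is visible."""
    results = []
    for name, check in checks:
        try:
            passed, detail = check()
        except Exception as exc:  # a crashing check counts as a failure
            passed, detail = False, f"check raised {exc!r}"
        results.append(CheckResult(name, passed, detail))
    return results


def is_trusted(results: List[CheckResult]) -> bool:
    """Trust a change only when at least one check ran and all of them passed."""
    return bool(results) and all(r.passed for r in results)


# Hypothetical stand-ins for the four checks (results below are made up).
example_checks: List[Check] = [
    ("regression_tests", lambda: (True, "suite passed")),
    ("end_to_end_real_channel", lambda: (True, "message round-trip ok")),
    ("trace_review", lambda: (False, "tool call retried repeatedly")),
    ("browser_ui_check", lambda: (True, "editor renders")),
]

results = run_verification_gate(example_checks)
for r in results:
    print(f"{'PASS' if r.passed else 'FAIL'}  {r.name}: {r.detail}")
print("trusted:", is_trusted(results))
```

The design choice worth noting is that the gate keeps running after the first failure. An agent that sees all failing checks at once can usually fix them in one pass instead of bouncing back to a human after each one.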

The debug layer is becoming a product advantage

I have become increasingly convinced that observability is not a nice-to-have for AI products. It is part of the product.

If agents are going to do meaningful work, you need to understand not only what they produced, but how they arrived there, where they failed, and which assumptions broke along the way.

That is why trace inspection is becoming part of the development workflow rather than an afterthought. Langfuse is especially useful here because it gives us a way to inspect what happened inside a run instead of guessing from the output alone.

This is one of those boring-sounding foundations that becomes a strategic advantage over time.

The companies that win in agentic software will not just have the flashiest demos. They will have the best debugging discipline.
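As a rough illustration of what "inspecting what happened inside a run" can mean in practice, here is a small Python sketch that walks a nested trace and reports the path to the deepest failing step. The `Span` structure and `first_failure_path` function are hypothetical and invented for this example; they are not Langfuse's API or data model.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Span:
    """One step inside a run; a hypothetical shape, not a real tracing schema."""
    name: str
    ok: bool = True
    error: str = ""
    children: List["Span"] = field(default_factory=list)


def first_failure_path(span: Span, path: Optional[List[str]] = None) -> Optional[List[str]]:
    """Return span names from the root down to the first failing step found.

    Children are searched before the span itself, so a failing leaf is
    reported rather than the parent that merely inherited the failure.
    """
    path = (path or []) + [span.name]
    for child in span.children:
        found = first_failure_path(child, path)
        if found:
            return found
    return path if not span.ok else None


# Made-up example run: the top-level failure originates in a nested request.
run = Span("run", ok=False, error="agent gave up", children=[
    Span("plan"),
    Span("tool:search", ok=False, error="upstream failed", children=[
        Span("http_request", ok=False, error="429 rate limited"),
    ]),
])

path = first_failure_path(run)
print(" > ".join(path))  # -> run > tool:search > http_request
```

Looking only at the output, this run reads as "agent gave up." Looking at the trace, the failure is a rate-limited request two levels down, which is a very different fix.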

Reliability beats raw model hype

A lot of the AI conversation is still overly centered on model identity, as if picking the right model is the whole strategy.

Model choice matters, of course. Right now, I still have preferences depending on the kind of work:

Opus tends to be stronger for planning and UI reasoning

ChatGPT tends to be stronger for getting builds done

But those preferences only matter inside a broader system.

For example, we recently resolved an OpenAI rate-limit issue by implementing progressive backoff more carefully. That kind of work is not glamorous. Nobody shares a screenshot of it on X with dramatic music in the background. But it is often the difference between a product that feels dependable and one that feels randomly flaky.
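For readers who have not implemented it, progressive backoff generally looks something like the sketch below. This is a generic version (exponential growth with full jitter), not the exact code we shipped. `RateLimitError` is a hypothetical stand-in for whatever error your API client raises on a rate limit, and the default delays are arbitrary assumptions.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Hypothetical stand-in for the client's rate-limit (HTTP 429) error."""


def call_with_backoff(
    fn: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying on RateLimitError with growing, jittered waits."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # The cap doubles each attempt: base, 2*base, 4*base, up to max_delay.
            cap = min(max_delay, base_delay * (2 ** attempt))
            # Full jitter: wait a random amount up to the cap so that many
            # clients do not all retry at the same instant.
            sleep(random.uniform(0, cap))
    raise RuntimeError("unreachable")  # keeps type checkers satisfied


# Usage: a made-up call that is rate limited twice, then succeeds.
calls = {"n": 0}
waits = []  # records the waits instead of actually sleeping


def flaky():
    calls["n"] += 1
    if calls["n"] < 3:
        raise RateLimitError("429")
    return "ok"


result = call_with_backoff(flaky, sleep=waits.append)
print(result, "after", calls["n"], "calls")  # -> ok after 3 calls
```

Passing `sleep` as a parameter is a small design choice that pays off: the retry logic can be tested instantly, without real waiting.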

The longer I build in this space, the less I believe the moat is any single model. The moat is the surrounding system: routing, observability, testing, recovery logic, memory, and interface design.

We are building GTM with the same agentic logic

The most interesting part of this week is that the GTM side is starting to mirror the product side.

We are syncing sources like Notion, Gmail, Drive, and websites so they can act as memory layers for downstream execution. That creates a much more durable context system than constantly briefing from scratch.

Once those sources are connected, an external agent can turn that context into:

Blog posts

Ads

Social content

Landing-page inputs

AI-powered GTM orchestration turns fragmented knowledge across tools into a continuous engine for content, campaigns, and growth.

This is not just a content efficiency play. It is a strategy for compounding context.
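One way to picture compounding context is a single memory store that feeds every output format, rather than a fresh briefing per asset. The Python sketch below is a deliberately simplified assumption about how that could look: `MemoryItem` and `build_brief` are hypothetical names, the memory entries are placeholders, and a real system would retrieve by relevance rather than by an exact topic match.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class MemoryItem:
    source: str  # e.g. "notion", "gmail", "drive", "website"
    topic: str
    text: str


def build_brief(memory: List[MemoryItem], topic: str, output_type: str,
                max_items: int = 3) -> str:
    """Assemble a prompt-ready brief from stored context on one topic."""
    relevant = [m for m in memory if m.topic == topic][:max_items]
    lines = [f"Write: {output_type}", f"Topic: {topic}", "Context:"]
    lines += [f"- [{m.source}] {m.text}" for m in relevant]
    return "\n".join(lines)


# Placeholder memory; the entries are invented for illustration only.
memory = [
    MemoryItem("notion", "voice mode", "Product note describing the feature"),
    MemoryItem("gmail", "voice mode", "Customer email phrasing the problem"),
    MemoryItem("website", "pricing", "Current pricing page copy"),
]

# The same stored context is reused across output types.
for output_type in ["blog post", "ad", "social post", "landing-page input"]:
    print(build_brief(memory, "voice mode", output_type))
    print()
```

The point of the sketch is the loop at the bottom: adding one new item to `memory` improves every downstream brief at once, which is what makes the context compound.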

Most small teams do not actually lack ideas. They lack a reliable way to transform what they already know into consistent distribution.

That is why I am also experimenting with a web developer on a roughly $2,000 per month retainer to produce programmatic SEO landing pages. I do not see this as “doing marketing manually with a bit of AI on top.” I see it as building an AI-native GTM engine where structured memory, reusable context, and execution workflows reinforce one another.

And importantly, it is validating something bigger: there is real demand for native GTM capabilities inside Notis itself.

Users do not just want an assistant that captures thoughts. They want one that helps turn those thoughts into distribution.

AI GTM is not about spamming faster

There is a lazy version of AI-driven growth that basically says: produce more content, more ads, more pages, more noise.

I do not think that works for long.

The better version is to use AI to increase relevance and continuity.

If your memory layer includes founder thinking, customer language, product updates, prior positioning, internal docs, and real-world transcripts, then AI can help you produce distribution that actually sounds grounded. Not generic. Not detached. Not “content marketing” in the dead, corporate sense.

That is the difference I care about.

The goal is not to industrialize slop. The goal is to make a small team look more coherent, more consistent, and more prolific than its size would normally allow.

Why this all feels connected now

When I zoom out, three things that used to feel separate are starting to merge:

Product development

Agent reliability

Go-to-market execution

They all depend on the same core principles:

Good memory

Clear verification loops

Lower-friction interfaces

Systems that keep improving with use

That is why I am increasingly skeptical of AI products that focus only on a thin chat layer. The real value shows up when the assistant is plugged into your workflows, your traces, your channels, your documents, and your operating context.

That is also why I think the distinction between “product” and “operations” is going to blur. The companies that win will not just ship software. They will build systems that help them ship, learn, distribute, and improve faster than teams of similar size.

A final founder note

There was also a possible investor intro from Adrian in the mix this week, which I appreciate.

But to be honest, moments like this mostly reinforce the same belief I already had: fundraising matters far less than whether the machine underneath the company is getting stronger.

When I look at Notis right now, what makes me optimistic is not a single feature launch or a single conversation. It is that the loops are improving.

The product is getting more coherent.

The agents are getting more accountable.

The GTM system is getting more context-rich.

That is the real progress.

And if we get this right, the long-term value of Notis will not be that it helps you ask better questions. It will be that it helps small teams build execution systems that keep shipping while they sleep.

Action items and open questions

Here is what I am focused on next:

Stabilize the beta surfaces enough to support a production push around April 20

Keep increasing the verification standards for coding agents

Turn memory-connected GTM workflows into repeatable internal playbooks

Learn whether the strongest pull is still productivity, or whether AI-native GTM is becoming an equally important wedge

The interesting question is no longer whether AI can generate useful output.

It clearly can.

The question is whether we can build systems where that output compounds into product momentum, operational leverage, and real distribution.

That is the game I think is worth playing.