
AI-Native Media Buying: The End of Manual Ad Ops

I think one of the biggest tells that a workflow is broken is how painful it feels to go back to it once you’ve worked with agents for a while.

That is exactly how I now feel about traditional ad operations.

Once you’ve had agents helping you research competitors, structure campaigns, prepare creative production, and move information cleanly across tools, opening a bloated ad dashboard starts to feel like going backward. Not because dashboards are useless, but because they were built for a human operator to click through a maze of tabs, duplicate work by hand, and keep the entire system in their head.

The real shift happening in AI-native growth is not “AI can generate ad images now.” That part is already commoditizing. The interesting part is everything around the image. The before. The after. The workflow.

The real problem was never just creative production

A lot of people still talk about AI media buying as if the win is generating one more variation of an ad in ChatGPT or Gemini.

That is not the real opportunity.

The real opportunity is turning media buying into an orchestrated system.

Before you create anything, you need a way to discover what is already working. That means identifying high-signal competitor ads, saving the right swipe files, and understanding the patterns behind them instead of randomly collecting inspiration. After you generate something, you need a safe way to move it into a content pipeline, validate it, publish it, and later connect performance back into the loop.

That is where most teams still break down. They have isolated tools, scattered screenshots, half-documented ideas, and a publishing process held together by memory and browser tabs.

When people ask me where the real product value is, my answer is increasingly simple: not in raw generation, but in workflow compression.

Research becomes more useful when agents can operate it

The first part of an AI-native ad workflow is research.

Not passive research. Operational research.

If an agent can look through competitor ads, identify recurring hooks, pull the strongest examples into a swipe file, and structure them in a way that is reusable, the entire quality of downstream work changes. You stop starting from a blank page. You stop relying on memory. You stop confusing “more inspiration” with better signal.

At that point, saved research becomes infrastructure.
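Here is a minimal Python sketch of a structured swipe file that shows what "saved research as infrastructure" can mean. Everything in it is an illustrative assumption and does not describe any particular research tool: the `SwipeEntry` schema, the `high_signal` and `recurring_hooks` helpers, and the use of an ad's running time as a rough proxy for signal.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class SwipeEntry:
    """One saved competitor ad (hypothetical schema for illustration)."""
    advertiser: str
    hook: str          # opening line or visual hook, normalized by the agent
    ad_format: str     # e.g. "ugc_video", "static_image"
    days_running: int  # assumed proxy for signal: long-running ads are likely working
    tags: List[str] = field(default_factory=list)


def high_signal(entries: List[SwipeEntry], min_days: int = 30) -> List[SwipeEntry]:
    """Keep only ads that have run at least `min_days` days."""
    return [e for e in entries if e.days_running >= min_days]


def recurring_hooks(entries: List[SwipeEntry], min_count: int = 2) -> List[Tuple[str, int]]:
    """Return hooks that appear in at least `min_count` saved ads, most common first."""
    counts = Counter(e.hook for e in entries)
    return [(hook, n) for hook, n in counts.most_common() if n >= min_count]


swipe_file = [
    SwipeEntry("BrandA", "stop wasting hours on reporting", "ugc_video", 45),
    SwipeEntry("BrandB", "stop wasting hours on reporting", "static_image", 60),
    SwipeEntry("BrandC", "the tool our CFO asked for", "static_image", 12),
    SwipeEntry("BrandD", "stop wasting hours on reporting", "ugc_video", 5),
]

signal = high_signal(swipe_file)
print(recurring_hooks(signal))
```

The entries are structured data rather than screenshots. An agent can therefore rerun the same query every week and compare the results instead of starting from a blank page.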

This is why I find MCP (Model Context Protocol) servers so interesting in this space. If an agent can access a clean interface for research, publishing, or campaign setup, it can do real work without depending on fragile browser automation. That matters a lot. Browser automation looks impressive in demos, but the moment a layout changes, your "automation" turns into technical debt.

API-first workflows are much less sexy on the surface, but much more durable in production.

That durability is the difference between a demo and an operating model.

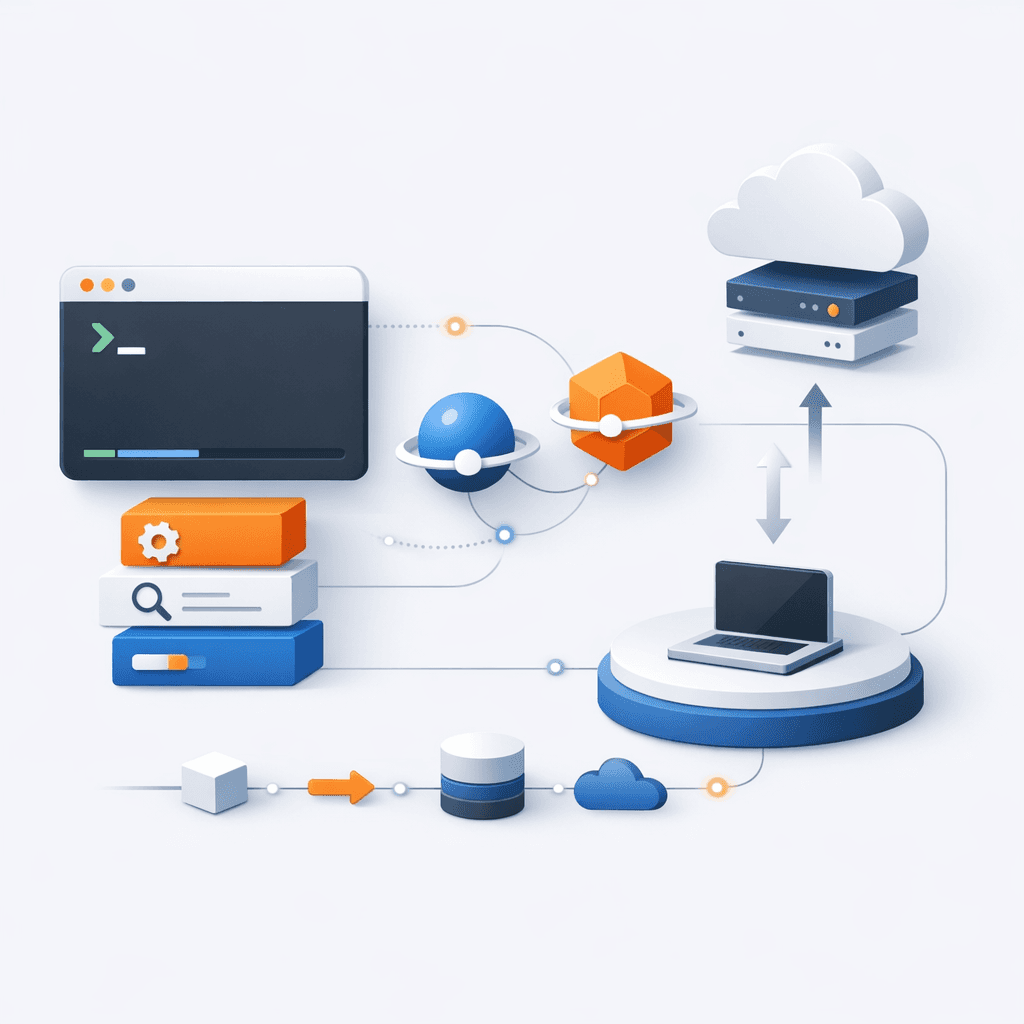

The winning stack looks more like CLI plus skills than one giant app

Another pattern I keep seeing is that agent-native products work better when they start with a strong command layer.

In practice, that often means a CLI first.

Why? Because a CLI gives you a reliable operational surface. It is composable, scriptable, and much easier to connect to agents than a purely visual interface. Then you add lightweight skills on top of it, rather than trying to cram every behavior into one monolithic app.
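Below is a minimal Python sketch of that layering, built on the standard-library `argparse` module. The `adops` program name, its `save` and `publish` subcommands, and the `SKILLS` registry are hypothetical. The point is the shape: a scriptable command layer that emits JSON, with skills defined as thin recipes on top of it rather than as new application logic.

```python
import argparse
import json
from typing import List, Optional


def cmd_save(args: argparse.Namespace) -> dict:
    # Placeholder: a real tool would call a research API here instead of echoing.
    return {"status": "saved", "url": args.url, "hook": args.hook}


def cmd_publish(args: argparse.Namespace) -> dict:
    # --dry-run lets an agent preview a publish without side effects.
    return {"status": "dry_run" if args.dry_run else "queued", "asset": args.asset}


def build_parser() -> argparse.ArgumentParser:
    parser = argparse.ArgumentParser(prog="adops")  # hypothetical tool name
    sub = parser.add_subparsers(dest="command", required=True)

    save = sub.add_parser("save", help="save an ad to the swipe file")
    save.add_argument("--url", required=True)
    save.add_argument("--hook", default="")
    save.set_defaults(handler=cmd_save)

    publish = sub.add_parser("publish", help="queue an approved asset")
    publish.add_argument("--asset", required=True)
    publish.add_argument("--dry-run", action="store_true")
    publish.set_defaults(handler=cmd_publish)
    return parser


def main(argv: Optional[List[str]] = None) -> str:
    args = build_parser().parse_args(argv)
    # Emitting JSON rather than formatted text makes the output easy for an agent to parse.
    return json.dumps(args.handler(args), sort_keys=True)


# A "skill" is a thin, documented recipe layered on the CLI, not a second app.
SKILLS = {
    "preview_publish": {
        "description": "Preview publishing an asset without side effects.",
        "argv": lambda asset: ["publish", "--asset", asset, "--dry-run"],
    },
}


def run_skill(name: str, *params: str) -> str:
    return main(SKILLS[name]["argv"](*params))
```

In this sketch, `run_skill("preview_publish", "asset-42")` returns `{"asset": "asset-42", "status": "dry_run"}`. A real entry point would call `print(main())`, so the same commands work from a shell, a script, or an agent.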

This is also how I increasingly think about distribution for AI-native software. The product is not just the interface. The product is the install path, the sync layer, and the operational consistency across environments.

At Notis, I’ve been obsessed with reducing that friction. If a user works across Claude, Codex, and Cursor, they should not have to manually rebuild the same setup three times. The CLI should manage installation. The system should sync local skills to the cloud. The plumbing should disappear.

That sounds like a product detail, but it is not. It is the difference between a tool people try once and a tool they actually integrate into their daily work.
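One simple way to approach the sync step is to compare content hashes, as in the Python sketch below. This is an assumption for illustration and not how Notis actually implements syncing. It fingerprints each local skill file with SHA-256 and plans a one-way push so the cloud copy mirrors the local one. The skills directory layout is hypothetical, and conflict handling and the actual upload calls are deliberately left out.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Dict, List


def fingerprint(directory: Path) -> Dict[str, str]:
    """Map each file's path (relative to `directory`) to a SHA-256 of its bytes."""
    return {
        p.relative_to(directory).as_posix(): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in sorted(directory.rglob("*"))
        if p.is_file()
    }


def plan_sync(local: Dict[str, str], remote: Dict[str, str]) -> Dict[str, List[str]]:
    """One-way plan: make the remote copy mirror the local skills directory."""
    return {
        "upload": sorted(k for k, h in local.items() if remote.get(k) != h),
        "delete": sorted(k for k in remote if k not in local),
    }


with tempfile.TemporaryDirectory() as tmp:
    skills_dir = Path(tmp)
    (skills_dir / "research.md").write_text("Find recurring hooks in saved ads.")
    (skills_dir / "publish.md").write_text("Validate before publishing.")
    local = fingerprint(skills_dir)

# Pretend the cloud copy has a stale publish.md and a skill that was removed locally.
remote = {
    "research.md": local["research.md"],
    "publish.md": "stale-hash",
    "legacy.md": "0" * 64,
}
plan = plan_sync(local, remote)
print(plan)
```

Only changed files are uploaded, so running the same command from Claude, Codex, or Cursor setups converges on one copy instead of rebuilding the setup three times.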

The future of software distribution for agents will probably look less like “log into this dashboard” and more like “install a capability, sync it everywhere, let the agent use it safely.”

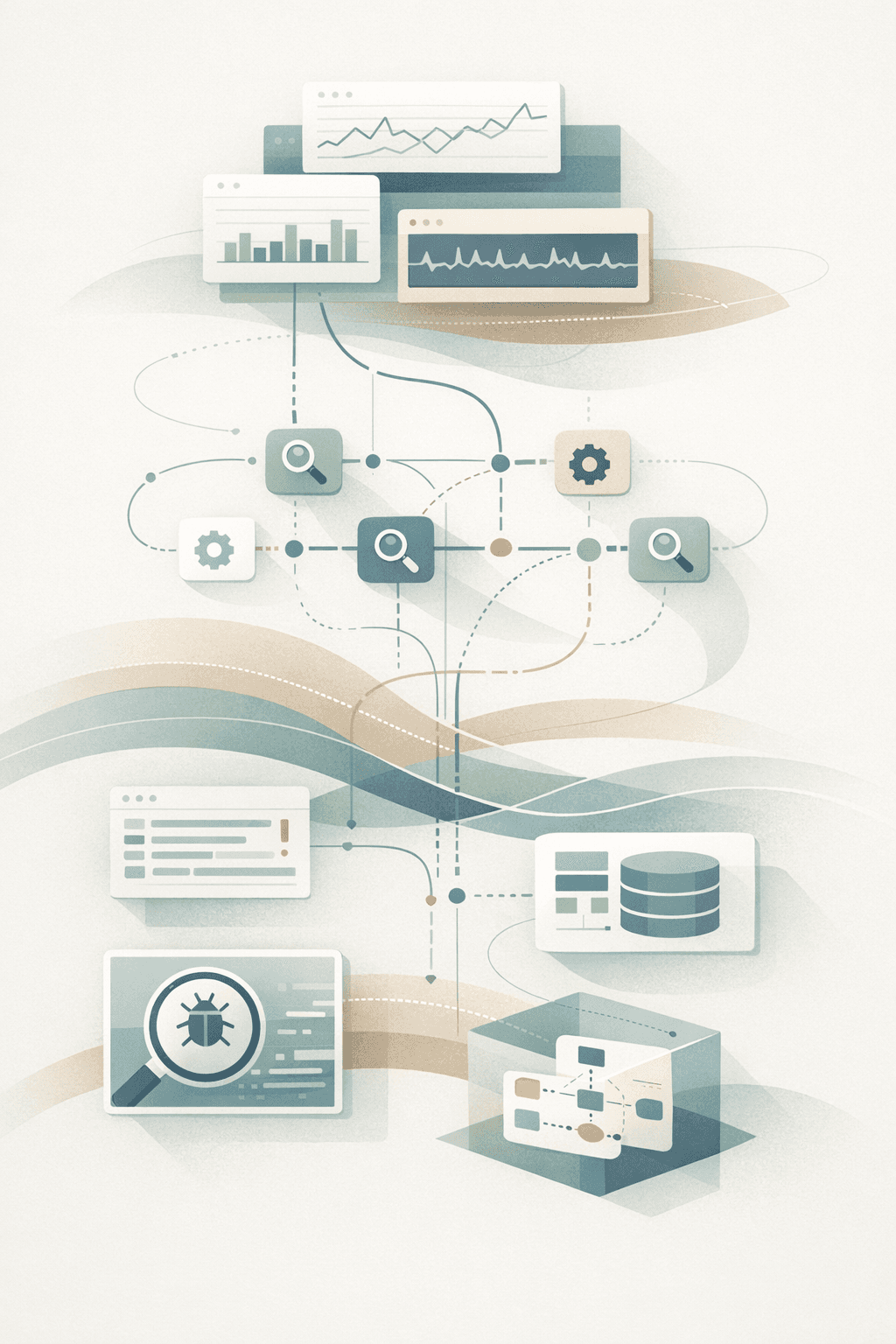

Observability is not optional once agents touch revenue workflows

One thing that becomes obvious the minute you run real agent workflows in production is that debugging gets weird very fast.

When a human makes a mistake in a dashboard, you can usually retrace the clicks.

When an agent makes a mistake across multiple tools, the failure can live anywhere: the prompt, the tool call, the missing context, the response format, or the handoff between steps. If you do not have observability, you are basically guessing.

That is why I care so much about tracing.

In production, I use Langfuse to trace inputs, outputs, and tool calls. It makes support dramatically easier. Instead of vague back-and-forth trying to reconstruct what happened, you can inspect the actual flow. You can see which tool was called, what context the agent had, where the reasoning broke, and what needs to be fixed.

That changes how you build. It also changes how confident you can be when agents start touching more sensitive workflows.

A lot of teams still treat observability like a later-stage concern. I think that is a mistake. If an agent is part of your revenue engine, tracing is not a nice-to-have. It is operational hygiene.
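A few lines of Python can sketch the kind of record a trace should capture. This is deliberately not the Langfuse SDK. `Trace`, `Span`, and the `tool` decorator are a hypothetical in-memory stand-in that shows what is worth recording for each tool call: inputs, output, error, and duration. In production you would send these spans to Langfuse or a similar backend instead of keeping them in a list.

```python
import functools
import time
import uuid
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional


@dataclass
class Span:
    """One recorded tool call: what went in, what came out, and how long it took."""
    name: str
    inputs: Dict[str, Any]
    output: Any = None
    error: Optional[str] = None
    duration_ms: float = 0.0


@dataclass
class Trace:
    """An in-memory stand-in for a tracing backend (hypothetical, not a real SDK)."""
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    spans: List[Span] = field(default_factory=list)

    def tool(self, fn: Callable) -> Callable:
        """Decorator that records every call to `fn` as a span, including failures."""
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            span = Span(name=fn.__name__, inputs={"args": args, "kwargs": kwargs})
            start = time.perf_counter()
            try:
                span.output = fn(*args, **kwargs)
                return span.output
            except Exception as exc:
                span.error = f"{type(exc).__name__}: {exc}"
                raise
            finally:
                span.duration_ms = (time.perf_counter() - start) * 1000
                self.spans.append(span)
        return wrapper


trace = Trace()


@trace.tool
def fetch_competitor_ads(brand: str) -> List[str]:
    # Hypothetical research tool; a real one would call an ads API.
    return [f"{brand}-ad-1", f"{brand}-ad-2"]


@trace.tool
def publish_asset(asset_id: str) -> str:
    # Hypothetical publishing tool that fails, to show how errors are captured.
    raise ValueError(f"asset {asset_id} failed validation")


fetch_competitor_ads("BrandA")
try:
    publish_asset("a42")
except ValueError:
    pass  # the failure is still recorded on the trace

for span in trace.spans:
    print(span.name, span.error)
```

The failed publish call still appears on the trace with its error message. That is exactly the information you need when reconstructing what an agent did.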

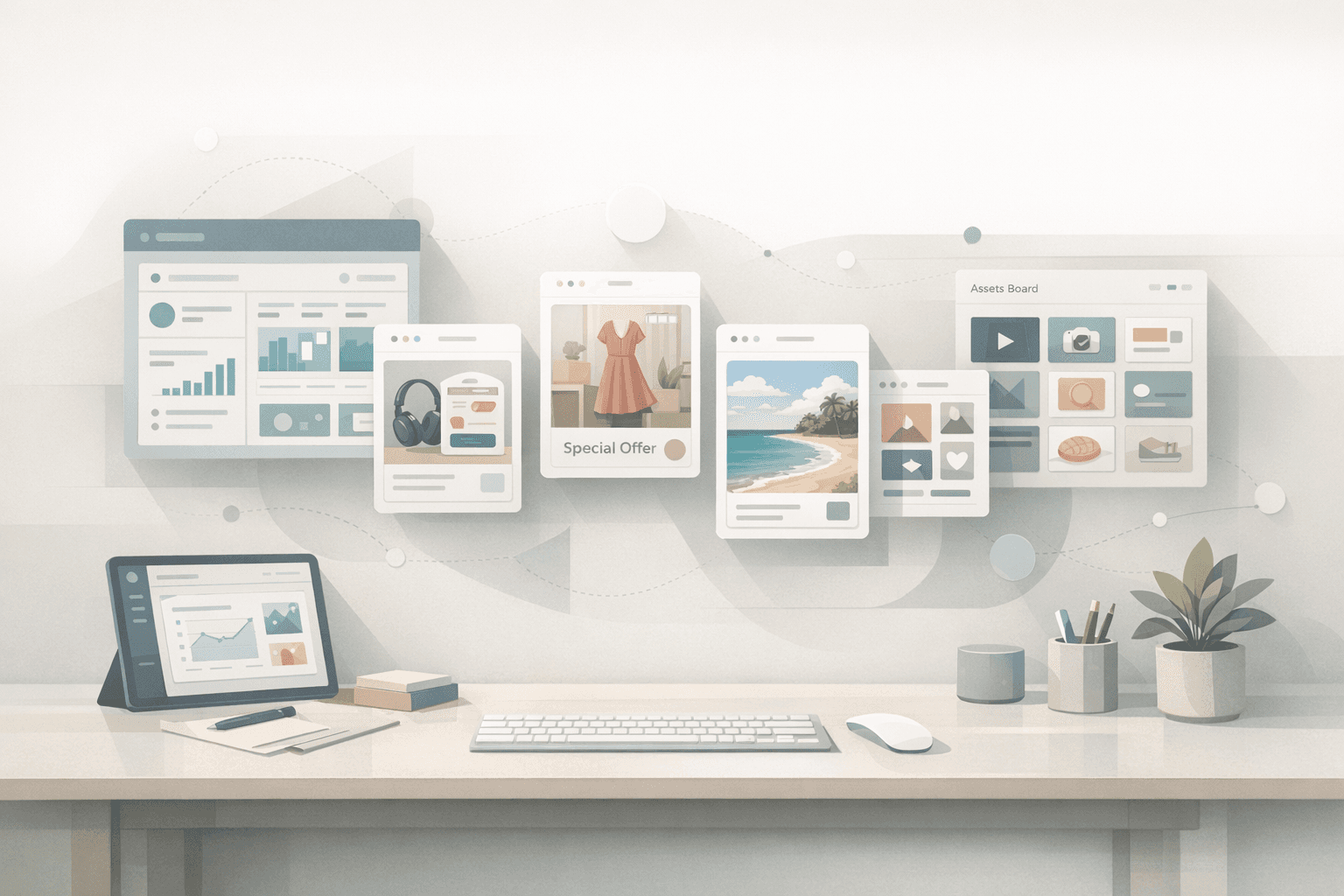

The most interesting use case is the closed-loop growth system

The workflow I find most compelling is not a single-generation moment. It is a loop.

Imagine this.

A high-signal swipe file gets saved. That triggers a recurring automation. The automation generates new creative directions based on the saved patterns. Those outputs get logged into a content pipeline. Validation happens before anything is published. Once approved, the assets move into campaign management and eventually back into analysis.
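That loop can be sketched as a small state machine in Python. The stage names, the headline-length rule in `validate`, and the `approved_by` field are illustrative assumptions. The design choice worth keeping is that transitions move only one step at a time, so an asset cannot reach publishing without passing validation and a named human approval. Feeding analysis back into the next round of research is not modeled here.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Stage(Enum):
    GENERATED = "generated"
    LOGGED = "logged"
    VALIDATED = "validated"
    APPROVED = "approved"
    PUBLISHED = "published"
    ANALYZED = "analyzed"


# Each stage can only advance to the next one, so nothing reaches PUBLISHED
# without passing through VALIDATED and APPROVED first.
NEXT = {
    Stage.GENERATED: Stage.LOGGED,
    Stage.LOGGED: Stage.VALIDATED,
    Stage.VALIDATED: Stage.APPROVED,
    Stage.APPROVED: Stage.PUBLISHED,
    Stage.PUBLISHED: Stage.ANALYZED,
}


@dataclass
class Asset:
    asset_id: str
    headline: str
    source_swipe: str  # links the asset back to the research that inspired it
    stage: Stage = Stage.GENERATED
    approved_by: Optional[str] = None
    history: List[Stage] = field(default_factory=list)


def validate(asset: Asset, max_headline_chars: int = 40) -> List[str]:
    """Illustrative pre-publish checks; real rules depend on the ad platform."""
    problems = []
    if not asset.headline.strip():
        problems.append("empty headline")
    if len(asset.headline) > max_headline_chars:
        problems.append("headline too long")
    return problems


def advance(asset: Asset, approved_by: Optional[str] = None) -> Asset:
    """Move an asset one stage forward, enforcing the validation and approval gates."""
    target = NEXT.get(asset.stage)
    if target is None:
        raise ValueError(f"{asset.asset_id} has already been analyzed")
    if target is Stage.VALIDATED:
        problems = validate(asset)
        if problems:
            raise ValueError(f"{asset.asset_id} failed validation: {problems}")
    if target is Stage.APPROVED:
        if not approved_by:
            raise PermissionError(f"{asset.asset_id} needs a human approver")
        asset.approved_by = approved_by
    asset.history.append(asset.stage)
    asset.stage = target
    return asset


asset = Asset("a1", "Stop wasting hours on reporting", source_swipe="BrandA")
advance(asset)  # GENERATED -> LOGGED
advance(asset)  # LOGGED -> VALIDATED, checks pass
try:
    advance(asset)  # blocked: no approver given
except PermissionError as exc:
    print(exc)
advance(asset, approved_by="reviewer@example.com")  # VALIDATED -> APPROVED
advance(asset)  # APPROVED -> PUBLISHED
print(asset.stage)
```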

Now you are no longer “using AI to make ads.”

You are building a growth system where research, production, publishing, and learning are connected.

That is the bigger message I keep coming back to: the future of growth work is not AI content generation in isolation. It is end-to-end orchestration.

And that orchestration matters because growth work is full of hidden coordination costs. People lose time between tools. Ideas die between meetings and execution. Winning ads get noticed too late. Publishing gets delayed by tiny operational bottlenecks. Analysis happens too far downstream to improve the next cycle.

An agent-native workflow compresses those gaps.

Manual ad ops will not disappear, but their center of gravity will shift

I do not think humans disappear from the process.

I also do not think serious teams should blindly hand over media buying to autonomous systems and hope for the best.

But I do think the center of gravity is changing.

Humans should define constraints, approve strategy, and make the high-leverage calls. Agents should handle the repetitive research, structure the inputs, move information across systems, and reduce the operational drag that slows everything down.

That division of labor makes much more sense than the current setup where talented operators spend a ridiculous amount of time doing work that is procedural, cross-tool, and frankly exhausting.
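One way to encode that split is a routing policy. Humans set the limits, and the agent acts on its own only when a proposed action stays inside them. The Python sketch below is hypothetical. The action names and the budget threshold were chosen for illustration and are not recommendations.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class Constraints:
    """Limits set by a human; names and values here are illustrative assumptions."""
    max_budget_change: float         # absolute currency amount allowed per action
    allowed_actions: FrozenSet[str]  # actions the agent may take without review


@dataclass(frozen=True)
class ProposedAction:
    kind: str                  # e.g. "pause_ad", "adjust_budget"
    budget_change: float = 0.0


def route(action: ProposedAction, limits: Constraints) -> str:
    """Return 'auto' if the agent may proceed, otherwise 'needs_human'."""
    if action.kind not in limits.allowed_actions:
        return "needs_human"
    if abs(action.budget_change) > limits.max_budget_change:
        return "needs_human"
    return "auto"


limits = Constraints(
    max_budget_change=50.0,
    allowed_actions=frozenset({"pause_ad", "adjust_budget"}),
)

for proposal in [
    ProposedAction("pause_ad"),
    ProposedAction("adjust_budget", budget_change=30.0),
    ProposedAction("adjust_budget", budget_change=-200.0),
    ProposedAction("launch_campaign"),
]:
    print(proposal.kind, proposal.budget_change, route(proposal, limits))
```

Anything outside the human-defined constraints is escalated rather than executed. The agent stays useful for procedural work, and the high-leverage calls remain with people.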

The teams that win will not be the ones with the fanciest prompt for image generation.

They will be the ones who design the cleanest systems around it.

What this means for founders building in AI

If you are building for marketers, media buyers, or operators, I think the takeaway is straightforward.

Do not obsess only over the generation layer.

Obsess over the workflow layer.

Make research reusable. Make publishing safe. Make installation painless. Make skills portable. Make tracing default. Build the orchestration fabric, not just the sparkly demo on top.

Because once people start working with agents all day, they do not want more dashboards.

They want systems that feel like momentum.

And in media buying, momentum comes from closing the loop faster than everyone else.